Note: This post is about analysing hate speech. The images below contain hateful terms.

The Sentinel Project’s release of Hatebase (www.hatebase.org) has raised legitimate questions about how the world’s largest database of hate speech can be used to analyse hateful language online, and even whether it can serve as a tool to prevent genocide. One justified criticism is that semantic analysis of speech is extraordinarily difficult on a medium such as Twitter, where casual use of hateful and derogatory language is rampant.

It’s important to remember a basic fact about information and intelligence. If we only collected sightings of hate terms and performed no analysis on them, we would simply be collecting information. Intelligence, however, is a value-added product created when information undergoes analysis. This is where the information that Hatebase collects, the Sentinel Project’s analysts, and civil society actors such as other NGOs come into play. Keep in mind that the Hatebase API is available free of charge to anyone for their own research. The usefulness of Hatebase is therefore limited only by our collective imagination.

To illustrate one possible use of Hatebase and the data it collects, I have used the open-source network analysis tool Gephi (https://gephi.org/) to analyse just a fraction of the information that Hatebase contains. Remember: this is only one way to use Hatebase data. The results follow in the remainder of this post.

Purpose:

To demonstrate how network analysis tools can be applied to a continually collected database of hate speech from Twitter to show how hate terms spread across the platform.

Methodology:

For the purposes of this exercise, tweets posted between April and June 2013 were pulled from Hatebase. A best-effort pass was made through these tweets to remove any that were spam or obviously superfluous.

Each tweet was then examined to isolate the Twitter usernames it contained, leaving a complete path for the tweet: the user who sent it, and the users he/she sent it to, mentioned, or retweeted. For the purposes of this exercise, these individuals are “linked” to the source user, willingly or not. This lets us trace the spread of a single hate term among users, from source to destination.
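The username-isolation step above can be sketched in Python. This is a minimal sketch, not the method actually used for this post: it assumes each tweet is available as a sender name plus raw text, and the function name `tweet_path` and the handle pattern (1 to 15 letters, digits or underscores after an `@`) are hypothetical choices. Every handle found in the text is treated as linked to the sender, whether it was a reply target, a mention or a retweeted account.

```python
import re

# Hypothetical pattern for Twitter handles: "@" followed by 1-15
# letters, digits or underscores. It will also match the "@" in an
# e-mail address, which is acceptable for a rough sketch.
HANDLE_RE = re.compile(r"@([A-Za-z0-9_]{1,15})")


def tweet_path(sender, text):
    """Return (sender, linked_users) for a single tweet.

    Every handle in the text (reply target, mention or retweeted
    account) is treated as "linked" to the sender. Handles are
    lower-cased because Twitter usernames are case-insensitive.
    """
    source = sender.lower()
    linked = []
    for handle in HANDLE_RE.findall(text):
        handle = handle.lower()
        # Ignore self-mentions and repeats, keeping first-seen order.
        if handle != source and handle not in linked:
            linked.append(handle)
    return source, linked


if __name__ == "__main__":
    print(tweet_path("UserA", "RT @UserB: example text @userc @UserB"))
    # ('usera', ['userb', 'userc'])
```

Duplicates are dropped so that a user mentioned twice in one tweet still produces a single link for that tweet; repeated links across tweets can then be counted as edge weight.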

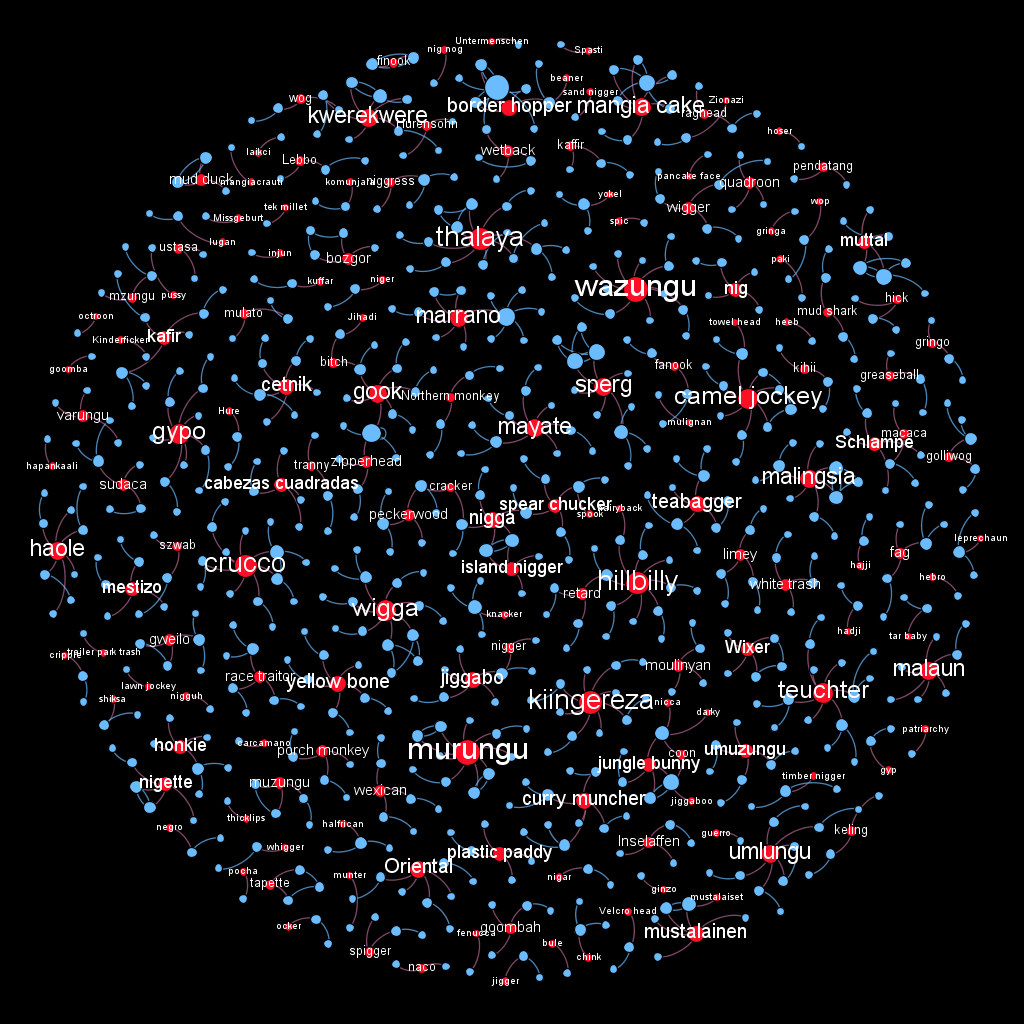

In Gephi, both words and users were represented as nodes, so a typical path flows like this: [hate word] > [originating user] > [target users]. Words were coloured red and users blue. With this layout, individuals who are not otherwise linked through other users can still be linked by their use of the same word, which shows the relative strength of that word across various networks. This is useful for words that are specific to certain regions, such as a Sentinel Project situation of concern.
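One way to prepare this word-and-user graph for Gephi is to write it out as two CSV tables. The sketch below is an illustration under stated assumptions, not the export used for this post: it assumes each tweet has already been reduced to a hypothetical `(term, sender, targets)` tuple, and the `word:` id prefix, the `Kind` column and the file names are invented for the example. Gephi's spreadsheet importer recognises `Id`/`Label` columns for nodes and `Source`/`Target`/`Type`/`Weight` columns for edges.

```python
import csv


def build_graph(tweets):
    """Build the [hate word] > [originating user] > [target users] graph.

    tweets: iterable of (term, sender, targets) tuples.
    Returns (nodes, edges): nodes maps node id -> kind ("word" or "user");
    edges maps (source, target) -> weight, i.e. how often that link occurs.
    """
    nodes, edges = {}, {}
    for term, sender, targets in tweets:
        # Prefix word ids so a term can never collide with a username.
        word_id = "word:" + term
        nodes[word_id] = "word"
        nodes[sender] = "user"
        edges[(word_id, sender)] = edges.get((word_id, sender), 0) + 1
        for target in targets:
            nodes[target] = "user"
            edges[(sender, target)] = edges.get((sender, target), 0) + 1
    return nodes, edges


def write_gephi_csv(nodes, edges, node_path="nodes.csv", edge_path="edges.csv"):
    """Write node and edge tables for Gephi's spreadsheet (CSV) importer."""
    with open(node_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["Id", "Label", "Kind"])
        for node_id, kind in nodes.items():
            # Show the bare term as the label for word nodes.
            label = node_id[len("word:"):] if kind == "word" else node_id
            writer.writerow([node_id, label, kind])
    with open(edge_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["Source", "Target", "Type", "Weight"])
        for (source, target), weight in edges.items():
            writer.writerow([source, target, "Directed", weight])
```

After import, the hypothetical `Kind` column can be used in Gephi to partition node colours (for example, red for words and blue for users).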

Node sizes were set to be dynamic, so that each node's size changes with its weight. In essence, the more active a node is in the network, the larger it is drawn.
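Gephi can size nodes itself by ranking them on an attribute such as degree, so no code is needed for this step. To make the idea concrete, here is a minimal Python sketch of one plausible reading of "weight": a node's weighted degree (the sum of the weights of all edges touching it), mapped linearly onto a size range. The edge format (`(source, target) -> weight`), the function names and the 10 to 50 size range are assumptions for illustration, not the settings used for the graphs in this post.

```python
from collections import defaultdict


def weighted_degree(edges):
    """Sum the weights of all edges touching each node (in plus out)."""
    degree = defaultdict(int)
    for (source, target), weight in edges.items():
        degree[source] += weight
        degree[target] += weight
    return dict(degree)


def node_sizes(edges, min_size=10.0, max_size=50.0):
    """Map weighted degree linearly onto [min_size, max_size]."""
    degree = weighted_degree(edges)
    if not degree:
        return {}
    low, high = min(degree.values()), max(degree.values())
    spread = (high - low) or 1  # avoid dividing by zero when all degrees match
    return {
        node: min_size + (d - low) / spread * (max_size - min_size)
        for node, d in degree.items()
    }
```

For edges `{("a", "b"): 2, ("b", "c"): 1}`, node `b` has the highest weighted degree (3) and is drawn at the maximum size, while `c` (1) gets the minimum.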

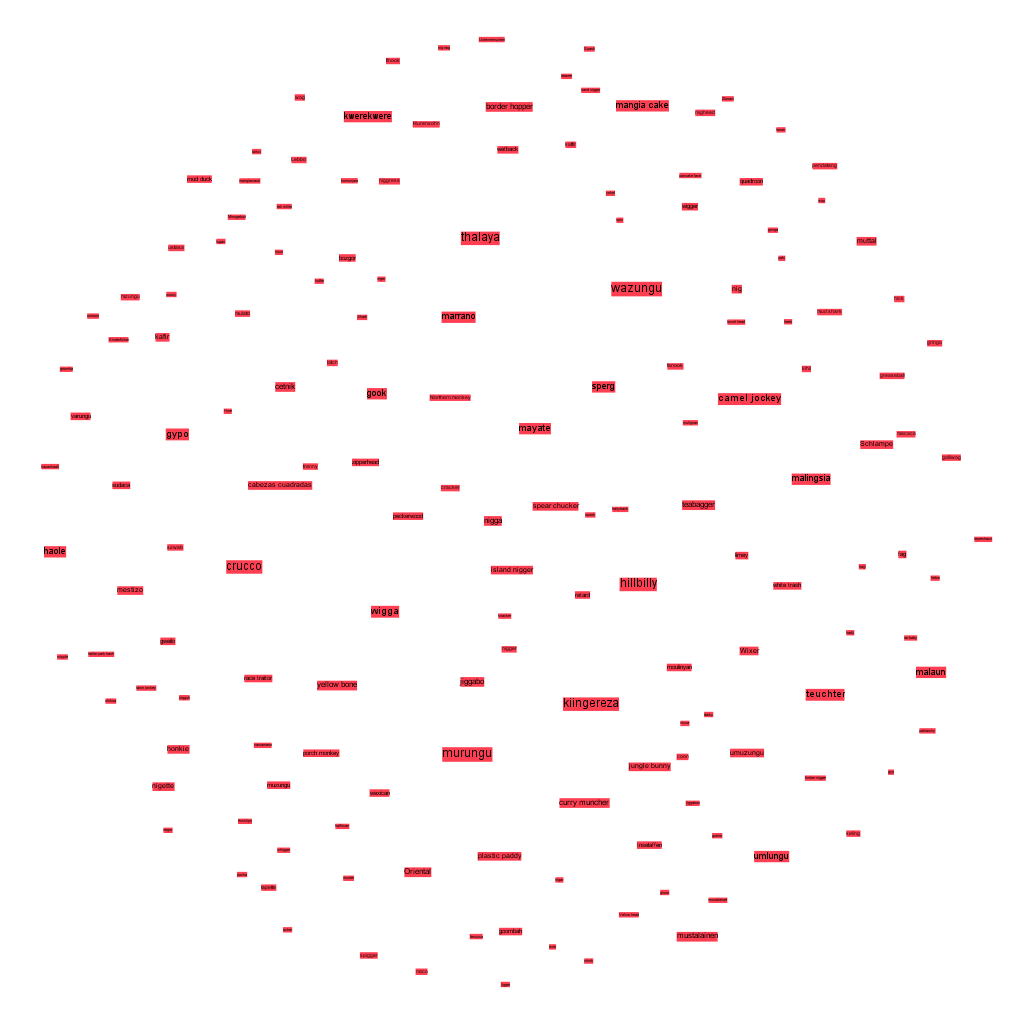

Word Cloud:

Words as Nodes and Users as Edges:

Conclusions:

Network analysis tools, combined with Hatebase, can be useful in evaluating the spread or influence of hate speech during a selected time period.

Not only does this approach provide a simple metric that clearly shows the most frequently used terms, it also allows us to determine which words are commonly used in conjunction with others.
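Both metrics can be sketched in a few lines of Python. This minimal sketch assumes the data has been reduced to `(term, sender)` sightings, and it uses one possible, hypothetical definition of "used in conjunction": two terms are counted together once for every originating user who used both, mirroring how the graph links words through shared users. Counting terms that appear in the same tweet would be an equally reasonable alternative. The function names are illustrative only.

```python
from collections import Counter, defaultdict
from itertools import combinations


def term_frequency(sightings):
    """Number of sightings per term (the simple 'most used' metric)."""
    return Counter(term for term, _ in sightings)


def term_cooccurrence(sightings):
    """Count term pairs used by the same originating user.

    sightings: iterable of (term, sender) pairs. Each pair of distinct
    terms is counted once per sender who used both of them.
    """
    terms_by_user = defaultdict(set)
    for term, sender in sightings:
        terms_by_user[sender].add(term)
    counts = Counter()
    for terms in terms_by_user.values():
        # sorted() gives each unordered pair a single canonical key.
        for pair in combinations(sorted(terms), 2):
            counts[pair] += 1
    return counts
```

`term_frequency(...).most_common(10)` then gives the top terms, and `term_cooccurrence(...).most_common(10)` the pairs most often used together.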

Further, it allows a more in-depth analysis of how a particular term is used. Is the term being used by one individual and directed at twenty more? Or are 100 individuals, who are not otherwise linked to each other, using the term and directing it at 10 more people each? We can see how a word is being used: the social network graph of a word that one person (or a bot) spams to a hundred others looks distinctly different from that of a word that spreads among various individual users or networks. The social uptake, or “pick-up”, of a term, meaning its spread among networks, is also important to observe.
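The contrast between one user (or bot) spamming a term and a term spreading across many users can be reduced to a rough per-term profile. This minimal sketch assumes each tweet has been reduced to a hypothetical `(term, sender, targets)` tuple. For each term it reports the number of distinct senders, the number of distinct users the term was directed at, and the share of those users reached by the single most active sender. A share near 1.0 with one sender points to broadcast or spam; many senders with a low share points to wider pick-up. The function name and the profile itself are illustrative choices, not a Hatebase or Gephi feature, and any cut-off between the two patterns would have to be chosen by the analyst.

```python
from collections import defaultdict


def spread_profile(tweets):
    """Summarise how each term spreads.

    tweets: iterable of (term, sender, targets) tuples.
    Returns term -> (distinct senders, distinct targets,
    share of targets reached by the most active sender).
    """
    senders = defaultdict(set)
    targets = defaultdict(set)
    # term -> sender -> set of users that sender directed the term at
    reach = defaultdict(lambda: defaultdict(set))
    for term, sender, tgts in tweets:
        senders[term].add(sender)
        targets[term].update(tgts)
        reach[term][sender].update(tgts)
    profile = {}
    for term in senders:
        total = len(targets[term])
        top = max(len(users) for users in reach[term].values())
        share = top / total if total else 0.0
        profile[term] = (len(senders[term]), total, share)
    return profile
```

In the two scenarios described above, a term spammed by one account to 100 users would profile as `(1, 100, 1.0)`, while a term used by 100 unconnected users who each direct it at 10 different people would show 100 senders, 1,000 targets and a share of 0.01.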

The longer that Hatebase collects this information, the better we will be able to determine the most common terms used amongst particular communities and the more we will be able to monitor the spread of words through Twitter. The application of this technique to Sentinel Project situations of concern could be useful in revealing those who are most frequently connected to hate speech in a region, as well as the users that are connected to them and so on.